Enterprise AI conversations have changed dramatically over the last year.

A short while ago, most discussions revolved around model capabilities. Businesses were fascinated by how large language models could generate content, summarize information, write code, or answer questions conversationally. Now the conversation is becoming more operational.

Companies are asking a much more practical question: how do we make AI systems work reliably with real business knowledge?

That shift is precisely why retrieval-augmented generation, or RAG, has become one of the most important architectural patterns in modern AI development.

For many organizations, the first instinct is fine-tuning: if a model needs company knowledge, it should simply be trained on internal data. In practice, enterprise systems rarely behave that neatly. Business knowledge changes constantly. Internal documentation evolves every week. Compliance policies get updated. Product specifications shift. Operational workflows change across teams. Support information grows continuously.

In large organizations, knowledge is rarely centralized in one clean repository either. It lives across CRMs, PDFs, internal wikis, cloud storage systems, ticketing platforms, spreadsheets, and legacy databases.

This is where many AI initiatives hit their first architectural reality.

The challenge is often not the intelligence of the model itself. The challenge is giving the model access to accurate, current, and governed information without creating an operational maintenance nightmare.

That is exactly where retrieval-based architectures begin outperforming fine-tuning.

RAG systems approach the problem differently. Instead of attempting to permanently train enterprise knowledge into the model, they retrieve relevant information dynamically at runtime and provide that context to the model before a response is generated. That single shift significantly changes the economics, scalability, maintainability, and reliability of enterprise AI systems. It is also one of the biggest reasons many organizations now prioritize retrieval-oriented architectures before exploring large-scale fine-tuning.

Why businesses initially overestimate fine-tuning

Fine-tuning has become one of the most misunderstood concepts in enterprise AI. Part of the confusion comes from how people intuitively think AI systems should work.

If an organization wants an AI assistant that understands internal workflows, product information, or compliance documentation, training the model on that information sounds logical. The assumption is simple: once the model learns the data, the problem is solved.

But fine-tuning is not designed to function as a continuously updated store of enterprise knowledge.

Fine-tuning primarily changes model behavior.

It helps models adapt to –

- tone

- style

- domain-specific terminology

- structured outputs

- classification patterns

- specialized tasks

What it does not solve efficiently is rapidly evolving knowledge.

Imagine an enterprise support organization where –

- product features change frequently

- troubleshooting procedures evolve monthly

- compliance policies update quarterly

- pricing documentation changes regularly

- customer onboarding workflows continuously improve

A fine-tuned model can quickly become outdated in environments like these. Keeping the model current would require repeated retraining cycles, evaluation processes, redeployments, and infrastructure overhead.

At smaller scales this may appear manageable. At enterprise scale, it often becomes operationally expensive and unnecessarily rigid.

This is one of the reasons retrieval-based systems are gaining momentum so quickly.

They separate knowledge access from model behavior, following the retrieval architecture approach described in Microsoft’s RAG implementation guide.

That distinction is subtle, but extremely important.

What retrieval-augmented generation actually changes

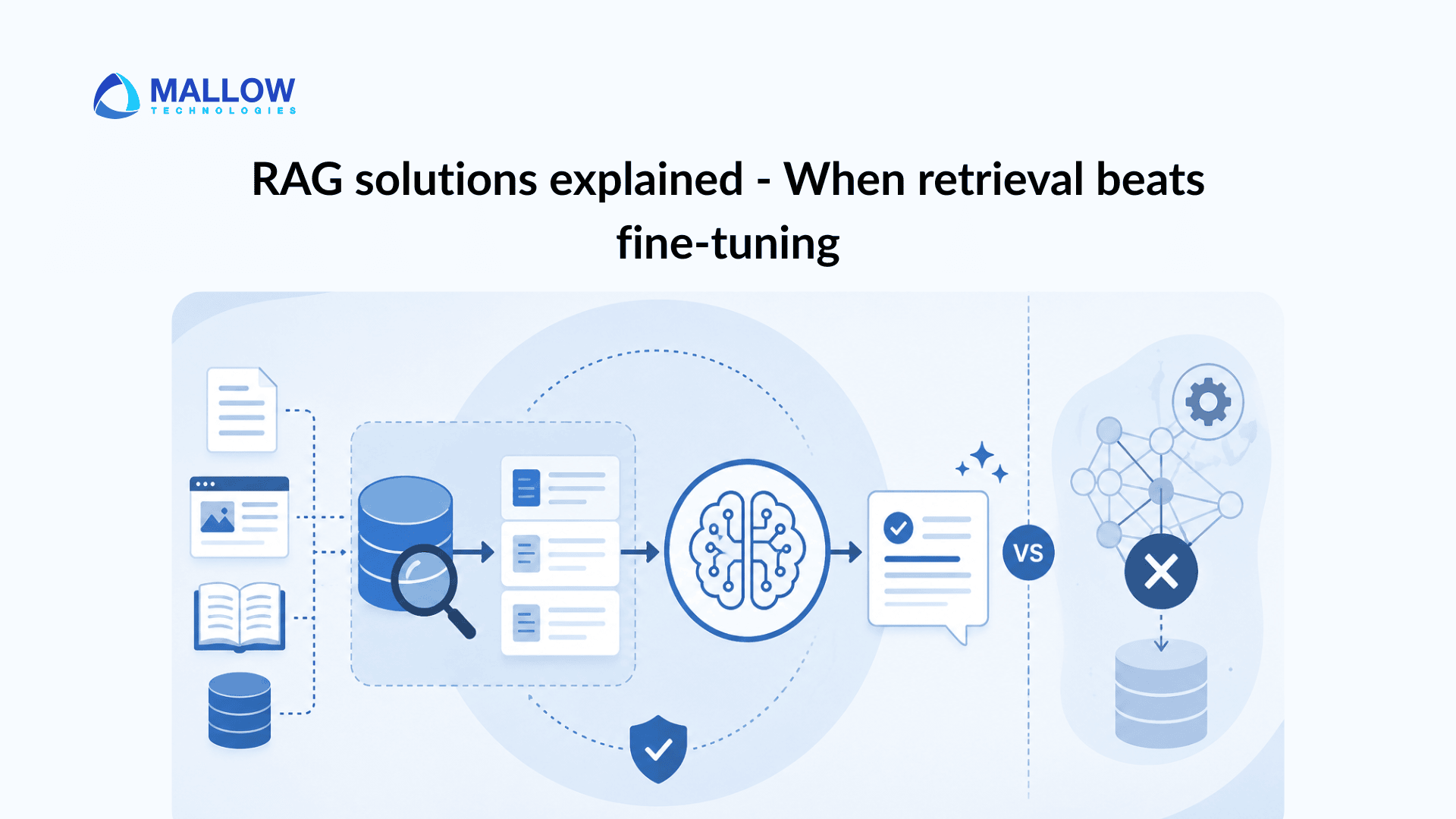

Retrieval-augmented generation changes the way AI systems access information. Instead of expecting the model to memorize all enterprise knowledge, the system retrieves relevant information dynamically at runtime.

The workflow is conceptually straightforward. When a user submits a query, the system first searches external knowledge sources for information that appears contextually relevant. That retrieved information is then injected into the model context before the model generates its response.
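The retrieve-then-generate flow can be sketched in a few lines of Python. This is a minimal illustration, not a production design. `retrieve` and `generate` are hypothetical callables standing in for a real search index and a real language model call, and the prompt wording is an assumption.

```python
from typing import Callable, List

def build_prompt(question: str, passages: List[str]) -> str:
    """Number the retrieved passages and place them ahead of the question."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )

def answer(question: str,
           retrieve: Callable[[str], List[str]],
           generate: Callable[[str], str]) -> str:
    passages = retrieve(question)              # 1. search external knowledge
    prompt = build_prompt(question, passages)  # 2. inject it into the model context
    return generate(prompt)                    # 3. generate the grounded response

# Hypothetical stand-ins so the sketch runs without external services.
def fake_retrieve(question: str) -> List[str]:
    return ["Refunds are processed within 5 business days."]

def fake_generate(prompt: str) -> str:
    return prompt  # a real system would send the prompt to a language model

print(answer("How long do refunds take?", fake_retrieve, fake_generate))
```

Keeping retrieval and generation as separate, swappable steps is what lets the knowledge source change independently of the model.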

The result is a system that behaves less like a static pretrained model and more like an intelligent reasoning layer connected to live organizational knowledge.

That architectural change has major implications.

For one, knowledge becomes significantly easier to maintain.

If documentation changes, organizations usually do not need to retrain the model. They simply update the underlying data source or retrieval index.

That reduces operational friction considerably. It also improves information freshness.

Traditional large language models only know what existed in their training data. Retrieval systems, on the other hand, can access newly updated enterprise information almost immediately after indexing. In fast-moving operational environments, that difference matters far more than many businesses initially realize.
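Here is why updates avoid retraining, shown with a hypothetical in-memory index. It is not any product's API. Changing knowledge is just an upsert, and the very next query sees the new content. Simple keyword overlap stands in for real retrieval scoring.

```python
import re
from typing import Dict, List, Set

def tokens(text: str) -> Set[str]:
    """Lowercase word tokens; a crude stand-in for real text analysis."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

class KnowledgeIndex:
    """Toy in-memory index standing in for a real search or vector index."""

    def __init__(self) -> None:
        self._docs: Dict[str, str] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        # Adding or replacing a document is a data update; no model is retrained.
        self._docs[doc_id] = text

    def delete(self, doc_id: str) -> None:
        self._docs.pop(doc_id, None)

    def search(self, query: str) -> List[str]:
        q = tokens(query)
        scored = [(len(q & tokens(text)), text) for text in self._docs.values()]
        # Most shared tokens first; documents with no overlap are dropped.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for score, text in scored if score > 0]

index = KnowledgeIndex()
index.upsert("pricing", "The Pro plan costs 20 USD per month.")
index.upsert("pricing", "The Pro plan costs 25 USD per month.")  # pricing page updated
print(index.search("Pro plan price"))  # → ['The Pro plan costs 25 USD per month.']
```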

Why RAG is becoming the default enterprise AI architecture

A major reason enterprises are moving toward RAG systems is that enterprise knowledge environments are inherently dynamic.

Very few organizations operate with perfectly stable information systems.

Documentation evolves constantly.

Policies change.

Internal procedures shift between departments.

Customer data updates continuously.

Even small operational modifications can create inconsistencies across workflows, and retrieval architectures handle this volatility much better than static training approaches.

Consider a customer support organization managing thousands of knowledge articles. With a retrieval-based system, new troubleshooting documentation can be indexed immediately and made available to the AI assistant without retraining the model. A fine-tuned model would typically need another training cycle before it reflected the same changes accurately.

At enterprise scale, that difference creates a significant operational advantage.

This is one reason retrieval systems are increasingly powering –

- enterprise search platforms

- internal knowledge assistants

- support automation systems

- compliance workflows

- AI copilots

- operational intelligence tools

The goal is no longer simply generating text.

The goal is generating contextually grounded responses tied to current enterprise knowledge. Product and engineering teams exploring broader enterprise AI adoption can also read our article on where GenAI solutions are already delivering measurable value across product and platform workflows.

The operational advantage of retrieval-based AI systems

One of the biggest benefits of retrieval systems is flexibility.

Enterprise AI initiatives rarely stay confined to their original scope.

A company may initially build an internal knowledge assistant for one department and later expand the system across –

- operations

- customer support

- HR

- compliance

- engineering

- procurement

Retrieval architectures generally adapt more easily to this kind of growth.

New knowledge repositories can be connected incrementally. Additional workflows can be layered into the retrieval pipeline. Access controls can evolve gradually.

The architecture remains modular.

That modularity becomes extremely valuable once AI systems begin interacting with real operational environments.

This is also where retrieval systems start outperforming simplistic chatbot implementations.

Many chatbot systems appear effective in controlled demonstrations because the scope is artificially narrow. Once users begin asking questions tied to constantly evolving operational data, performance often deteriorates quickly. Retrieval systems reduce this problem by grounding responses in live organizational knowledge. That grounding layer is becoming one of the defining characteristics of modern enterprise AI.

How RAG systems actually work behind the scenes

Although RAG architectures sound conceptually simple, production implementations involve several moving parts working together.

The process typically begins with embeddings.

Embeddings convert text into numerical vector representations that capture semantic meaning rather than relying only on keyword matching.

This allows retrieval systems to identify contextually similar information even when wording differs.

Those vectors are stored in a vector database, using platforms such as Pinecone or other enterprise retrieval infrastructure.

When a user submits a query, the system converts that query into an embedding and searches for semantically relevant information. The retrieved context is then injected into the model prompt before the response is generated.
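The query-time steps can be sketched with a brute-force, in-memory vector store. This illustration rests on several assumptions. The vectors are hand-made rather than produced by an embedding model. `InMemoryVectorStore` is a hypothetical class, not the Pinecone API or any other product's interface. Cosine similarity is used as the relevance measure.

```python
import heapq
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]
Hit = Tuple[float, str, str]  # (similarity score, document ID, text)

def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity: close to 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class InMemoryVectorStore:
    """Brute-force stand-in for a vector database."""

    def __init__(self) -> None:
        self._rows: List[Tuple[str, str, Vector]] = []

    def add(self, doc_id: str, text: str, vector: Vector) -> None:
        self._rows.append((doc_id, text, vector))

    def query(self, vector: Vector, top_k: int = 2) -> List[Hit]:
        # Scores every row; production vector databases typically use
        # approximate nearest-neighbor indexes rather than a full scan.
        scored = ((cosine(vector, v), doc_id, text) for doc_id, text, v in self._rows)
        return heapq.nlargest(top_k, scored)

def augment(question: str, hits: List[Hit]) -> str:
    """Inject the retrieved text into the prompt ahead of the question."""
    context = "\n".join(f"- ({doc_id}) {text}" for _, doc_id, text in hits)
    return f"Context:\n{context}\n\nQuestion: {question}"

# Hand-made 3-dimensional vectors for illustration only; a real embedding
# model would produce these (with far more dimensions) from the text itself.
store = InMemoryVectorStore()
store.add("kb-1", "Reset your password from the login page.", [0.9, 0.1, 0.0])
store.add("kb-2", "Invoices are listed under Billing.", [0.0, 0.1, 0.9])
store.add("kb-3", "Accounts lock after five failed sign-ins.", [0.7, 0.3, 0.1])

query_vector = [0.85, 0.15, 0.05]  # pretend embedding of "I can't log in"
hits = store.query(query_vector, top_k=2)
print(augment("I can't log in", hits))
```

Neither of the two retrieved articles contains the words "log in". They are selected because their vectors point in a similar direction to the query vector. That is the behavior embeddings are meant to provide.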

What sounds simple at a high level becomes significantly more nuanced in production.

Strong retrieval quality depends heavily on –

- document chunking strategies

- metadata filtering

- semantic ranking

- relevance optimization

- retrieval latency

- context window management
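Three of those concerns can be sketched together: metadata filtering, ranking, and context window management. The sketch makes two assumptions. Each chunk already carries a relevance score from some upstream ranker, and token counts are approximated by word counts. The field names and the greedy packing rule are illustrative choices, not a standard.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Chunk:
    text: str
    score: float  # relevance score from an upstream ranker (assumed to exist)
    metadata: Dict[str, str] = field(default_factory=dict)

def approx_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word. Real systems
    # should count with the target model's own tokenizer.
    return len(text.split())

def select_context(chunks: List[Chunk], filters: Dict[str, str], budget: int) -> List[Chunk]:
    # 1. Metadata filtering: keep chunks whose metadata matches every filter.
    eligible = [c for c in chunks
                if all(c.metadata.get(key) == value for key, value in filters.items())]
    # 2. Ranking: highest relevance score first.
    eligible.sort(key=lambda c: c.score, reverse=True)
    # 3. Context window management: greedily fill the token budget.
    selected: List[Chunk] = []
    used = 0
    for chunk in eligible:
        cost = approx_tokens(chunk.text)
        if used + cost > budget:
            continue  # too big for the remaining space; a smaller chunk may still fit
        selected.append(chunk)
        used += cost
    return selected

chunks = [
    Chunk("Travel over 500 USD needs manager approval.", 0.92, {"region": "EU"}),
    Chunk("EU staff book travel through the internal portal.", 0.88, {"region": "EU"}),
    Chunk("US travel policy was revised in March.", 0.95, {"region": "US"}),
]
for chunk in select_context(chunks, {"region": "EU"}, budget=12):
    print(chunk.text)
```

The US chunk has the highest score but is filtered out by metadata before ranking. The second EU chunk is dropped only because it would exceed the small example budget.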

This is why production-grade RAG systems require considerably more engineering depth than many organizations initially expect. Poor retrieval quality can degrade AI performance quickly. If irrelevant context enters the context window, response quality drops. If critical information is missed, hallucinations become more likely. In many enterprise systems, retrieval quality becomes just as important as model quality itself.

Businesses evaluating enterprise AI implementation can also explore our guide explaining how to assess technical depth, scalability thinking, and operational maturity in AI development partners.

Where fine-tuning still makes sense

The growing popularity of RAG does not mean fine-tuning has become irrelevant. In fact, some of the strongest enterprise AI systems combine both approaches intentionally.

Fine-tuning remains valuable when businesses need –

- highly specialized behavioral adaptation

- domain-specific language handling

- structured output consistency

- classification optimization

- latency-sensitive deployment patterns

For example, industries such as healthcare, finance, and legal services often involve specialized terminology and communication structures that benefit from targeted model adaptation. Similarly, applications requiring highly predictable output formatting may still benefit from fine-tuning workflows.

The important distinction is understanding what problem fine-tuning is actually solving. If the primary challenge is keeping enterprise knowledge current and accessible, retrieval is often the better architectural solution.

If the challenge is behavioral specialization or output consistency, fine-tuning may still provide strong value.

The mistake many businesses make is treating both approaches as interchangeable. They are not.

Why governance and explainability matter more in enterprise AI

As AI systems become operationally embedded into enterprise workflows, governance concerns become impossible to ignore.

This is another area where retrieval architectures often provide practical advantages.

Retrieval systems can expose source references tied to generated responses.

That capability becomes extremely important in environments involving –

- compliance

- audits

- policy interpretation

- regulated workflows

- operational accountability

Fine-tuned models generally provide far less visibility into where specific outputs originated.
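One common way to expose sources works in three steps: label each retrieved passage in the prompt, ask the model to cite labels such as [1], and map those labels back to document identifiers afterwards. The sketch below assumes that convention. The source IDs and the model answer are hypothetical. A model may omit or invent citations, so the returned labels should still be validated.

```python
import re
from typing import Dict, List, Tuple

def number_sources(passages: List[Tuple[str, str]]) -> Tuple[str, Dict[int, str]]:
    """Label each (source_id, text) pair as [1], [2], ... for use in the prompt."""
    lines: List[str] = []
    key: Dict[int, str] = {}
    for i, (source_id, text) in enumerate(passages, start=1):
        lines.append(f"[{i}] {text}")
        key[i] = source_id
    return "\n".join(lines), key

def cited_sources(answer: str, key: Dict[int, str]) -> List[str]:
    """Map [n] markers in an answer back to source IDs, ignoring unknown labels."""
    found: List[str] = []
    for label in re.findall(r"\[(\d+)\]", answer):
        source_id = key.get(int(label))
        if source_id is not None and source_id not in found:
            found.append(source_id)
    return found

context, key = number_sources([
    ("policies/expenses-v4.pdf#page=2", "Meals are reimbursed up to 40 USD per day."),
    ("wiki/travel-faq", "Flights should be booked at least 14 days ahead."),
])
model_answer = "Meal claims are capped at 40 USD per day [1]. See also [7]."  # hypothetical
print(cited_sources(model_answer, key))  # → ['policies/expenses-v4.pdf#page=2']
```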

For enterprises operating in regulated industries, explainability increasingly matters as much as response quality.

Security considerations are also becoming significantly more important.

Enterprise retrieval systems frequently interact with sensitive internal information, which means governance planning must include –

- access control systems

- permission-aware retrieval

- audit logging

- encryption policies

- retrieval monitoring
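Permission-aware retrieval and audit logging can be sketched as two steps. First, filter candidate documents against the requesting user's groups before anything is matched. Then log what was returned. The group model, class names, and keyword matching here are hypothetical simplifications. A real deployment would typically enforce permissions inside the search index or through the source system's own access controls.

```python
import logging
import re
from dataclasses import dataclass
from typing import FrozenSet, List, Set

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("rag.audit")

@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]

@dataclass(frozen=True)
class User:
    user_id: str
    groups: FrozenSet[str]

def words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve_for_user(user: User, query: str, docs: List[Document]) -> List[Document]:
    # Access control runs BEFORE matching, so restricted text never reaches the prompt.
    visible = [d for d in docs if d.allowed_groups & user.groups]
    hits = [d for d in visible if words(query) & words(d.text)]
    # Audit trail: who asked what, and which documents were returned.
    audit_log.info("user=%s query=%r docs=%s", user.user_id, query, [d.doc_id for d in hits])
    return hits

docs = [
    Document("hr-7", "Salary bands for engineering roles.", frozenset({"hr"})),
    Document("eng-2", "Engineering onboarding checklist.", frozenset({"engineering", "hr"})),
]
alice = User("alice", frozenset({"engineering"}))
print([d.doc_id for d in retrieve_for_user(alice, "engineering salary bands", docs)])  # → ['eng-2']
```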

Frameworks such as the OWASP LLM Security Guidance continue to highlight risks associated with large language model deployments, particularly prompt injection and sensitive data exposure.

Strong enterprise RAG systems therefore require much more than simply connecting a model to a document repository.

The biggest mistakes businesses make when building RAG systems

One reason many early RAG implementations struggle is that businesses underestimate retrieval complexity.

The phrase “connect your documents to AI” has made retrieval architectures sound deceptively simple.

Production systems are not simple.

A retrieval pipeline is only as effective as the quality of its –

- indexing strategy

- chunking structure

- metadata architecture

- relevance tuning

- governance implementation
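Chunking structure is one of the easiest of these to experiment with. Below is a minimal sketch of one simple strategy: fixed-size word windows with overlap. A sentence cut at a chunk boundary still appears whole in the neighboring chunk, provided it fits within the overlap. The sizes are placeholders rather than recommendations, and many production pipelines split on headings or paragraphs instead.

```python
from typing import List

def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into windows of chunk_size words, each sharing `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the text
    return chunks

sample = " ".join(f"w{i}" for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=1))
# → ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Larger `chunk_size` values bring in more surrounding context but also more noise. Smaller values sharpen matches but risk splitting an idea across chunks.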

Poor chunking alone can severely reduce system performance. If chunks are too large, retrieval becomes noisy. If they are too fragmented, context disappears. Similarly, weak semantic ranking pipelines often surface irrelevant information, which can cause downstream hallucinations even when the model itself is highly capable.

Another common mistake is ignoring long-term operational maintenance.

Enterprise retrieval systems require ongoing –

- observability

- retrieval evaluation

- indexing optimization

- access control management

- infrastructure monitoring
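Retrieval evaluation can start small: a hand-labeled set of queries paired with the documents that should come back, scored with a metric such as recall@k. The sketch below assumes such a labeled set exists. The query strings, document IDs, and stub retriever are hypothetical.

```python
from typing import Callable, Dict, List, Set

def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Share of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def mean_recall_at_k(retriever: Callable[[str], List[str]],
                     labeled: Dict[str, Set[str]], k: int = 5) -> float:
    """Average recall@k over a labeled set of query -> relevant document IDs."""
    if not labeled:
        return 0.0
    scores = [recall_at_k(retriever(query), relevant, k)
              for query, relevant in labeled.items()]
    return sum(scores) / len(scores)

labeled = {
    "reset password": {"kb-1"},
    "download invoice": {"kb-2", "kb-9"},
}
stub_results = {
    "reset password": ["kb-1", "kb-4"],
    "download invoice": ["kb-2", "kb-5"],
}
print(mean_recall_at_k(lambda q: stub_results[q], labeled, k=2))  # → 0.75
```

You can re-run this score whenever documents, chunking, or ranking change. That is one way to catch retrieval regressions before users notice them.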

RAG systems are not “set it and forget it” architectures.

They are operational systems that evolve alongside enterprise knowledge.

The future of enterprise AI will likely be retrieval-centric

The broader enterprise AI market is gradually moving toward hybrid architectures where retrieval and fine-tuning complement one another instead of competing, a trend also highlighted in McKinsey’s research on the evolving enterprise AI landscape.

Retrieval handles –

- knowledge access

- contextual grounding

- information freshness

- explainability

Fine-tuning handles –

- behavioral adaptation

- domain optimization

- output consistency

As AI agents become more sophisticated and workflow orchestration becomes more important, retrieval-oriented architectures will likely form the foundation of many enterprise AI ecosystems. This is especially true for organizations operating across fragmented knowledge environments where information changes continuously. Businesses exploring orchestration-heavy AI environments can also read about how multiple AI systems coordinate planning, execution, and operational handoffs.

The long-term value of AI will not come solely from larger models.

It will come from systems capable of interacting intelligently with live operational knowledge. That is precisely why retrieval is becoming such a critical architectural layer.

How Mallow helps businesses build enterprise RAG solutions

At Mallow, we help organizations design retrieval-oriented AI systems that align with real operational workflows, enterprise infrastructure, and long-term scalability requirements.

Our engineering teams work across –

- retrieval pipeline architecture

- vector database integration

- workflow orchestration

- cloud-native AI infrastructure

- enterprise system connectivity

- observability implementation

- governance and security planning

Because enterprise AI systems extend far beyond model integration alone, our approach focuses heavily on operational reliability, maintainability, and scalability from the beginning of the implementation lifecycle.

Whether businesses are evaluating –

- enterprise knowledge assistants

- AI-powered workflow systems

- retrieval-based copilots

- hybrid RAG architectures

- fine-tuning strategies

we help identify the right architectural approach based on operational complexity, infrastructure maturity, and business objectives.

If you are exploring enterprise RAG solutions or evaluating the right AI architecture for your business, feel free to reach out to us.

Your queries, our answers

What is retrieval-augmented generation?

Retrieval-augmented generation is an AI architecture that retrieves external information dynamically during response generation instead of relying entirely on the model’s training data.

When does RAG perform better than fine-tuning?

RAG generally performs better when enterprise knowledge changes frequently, information exists across multiple systems, or businesses require explainability and scalable knowledge access.

Does RAG eliminate hallucinations?

No. However, retrieval systems can significantly reduce hallucinations by grounding responses in retrieved enterprise knowledge instead of relying only on pretrained model memory.

Is fine-tuning still useful?

Yes. Fine-tuning remains valuable for behavioral adaptation, specialized language handling, structured outputs, and certain low-latency applications.

Can RAG and fine-tuning be combined?

Yes. Many enterprise AI architectures now combine retrieval systems for knowledge grounding with fine-tuning for behavioral optimization and domain specialization.


Author

Jayaprakash

Jayaprakash is an accomplished technical manager at Mallow, with a passion for software development and a penchant for delivering exceptional results. With several years of experience in the industry, Jayaprakash has honed his skills in leading cross-functional teams, driving technical innovation, and delivering high-quality solutions to clients. As a technical manager, Jayaprakash is known for his exceptional leadership qualities and his ability to inspire and motivate his team members. He excels at fostering a collaborative and innovative work environment, empowering individuals to reach their full potential and achieve collective goals. During his leisure time, he finds joy in cherishing moments with his kids and indulging in Netflix entertainment.